Lpdance

従概要

-

設立日 19 de 10月 de 2005

-

分野 AloShigoto Pro

会社概要

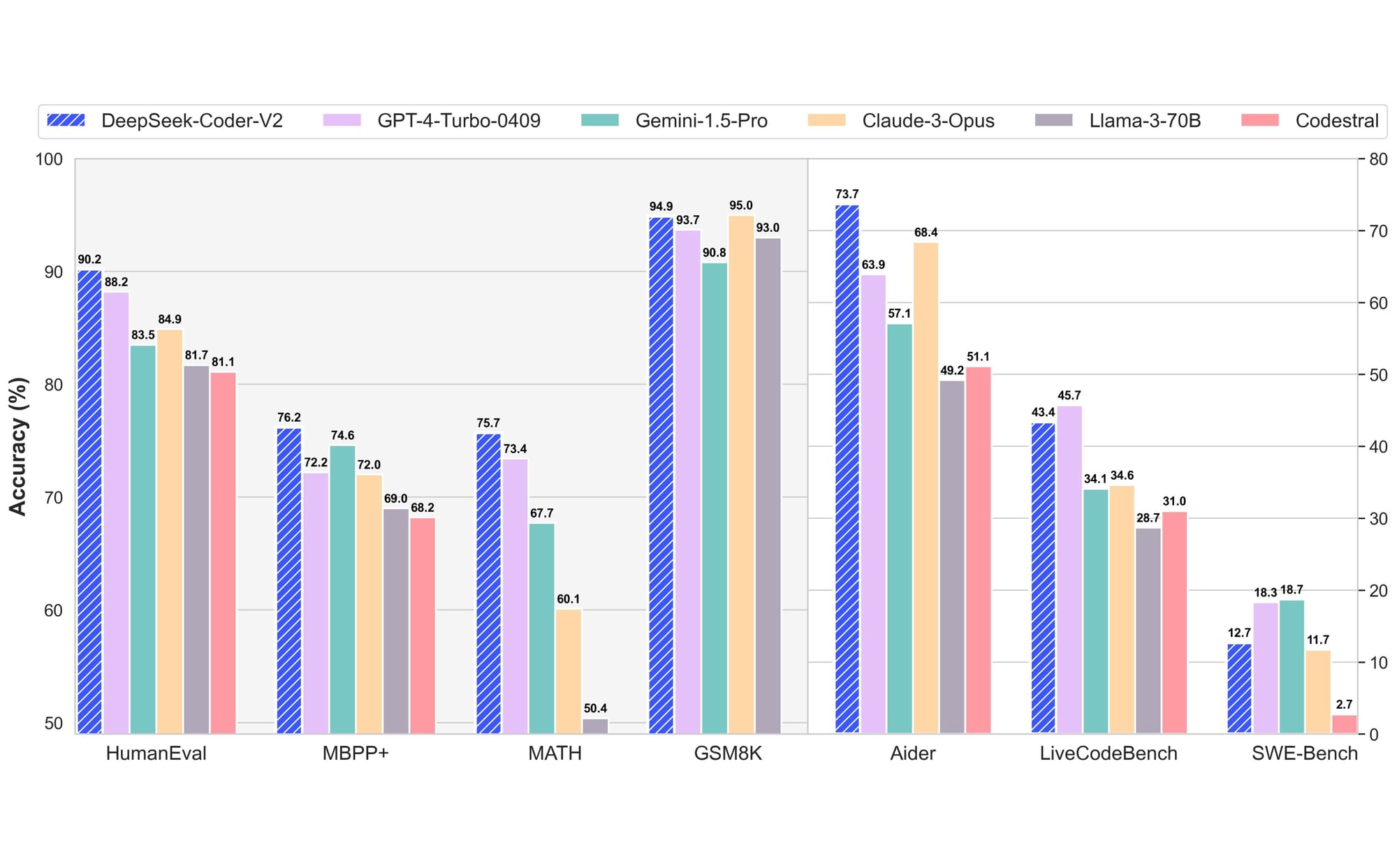

I tested DeepSeek’s R1 and V3 coding skills - and we’re not all doomed (yet)

DeepSeek exploded into the world’s consciousness this past weekend. It stands out for three powerful reasons:

1. It’s an AI chatbot from China, rather than the US.

2. It’s open source.

3. It uses vastly less infrastructure than the big AI tools we’ve been looking at.

Given the US government’s concerns over TikTok and possible Chinese government involvement in that code, a new AI emerging from China is bound to generate attention. ZDNET’s Radhika Rajkumar did a deep dive into those issues in her article “Why China’s DeepSeek could burst our AI bubble.”

In this article, we’re avoiding politics. Instead, I’m putting both DeepSeek V3 and DeepSeek R1 through the same set of AI coding tests I’ve thrown at 10 other large language models. According to DeepSeek itself:

Choose V3 for tasks requiring depth and accuracy (e.g., solving advanced math problems, generating complex code).

Choose R1 for latency-sensitive, high-volume applications (e.g., customer support automation, basic text processing).

You can choose between R1 and V3 by clicking the little button in the chat interface. If the button is blue, you’re using R1.

The short answer is this: impressive, but clearly not perfect. Let’s dig in.

Test 1: Writing a WordPress plugin

This test was actually my first test of ChatGPT’s programming prowess, way back in the day. My wife needed a plugin for WordPress that would help her run a participation device for her online group.

Her needs were fairly simple. The plugin had to take in a list of names, one name per line. It then had to sort the names and, if there were duplicate names, separate them so they weren’t listed side by side.

I didn’t really have time to code it for her, so I decided to give the AI the challenge on a whim. To my huge surprise, it worked.

Since then, it’s been my first test for AIs when evaluating their programming skills. It requires the AI to know how to set up code for the WordPress framework and to follow prompts clearly enough to build both the user interface and the program logic.

Only about half of the AIs I’ve tested can fully pass this test. Now, however, we can add one more to the winner’s circle.

DeepSeek V3 created both the user interface and program logic exactly as specified. As for DeepSeek R1, well, that’s an interesting case. The “reasoning” aspect of R1 caused the AI to spit out 4,502 words of analysis before sharing the code.

The UI looked different, with much wider input areas. However, both the UI and the logic worked, so R1 also passes this test.

So far, DeepSeek V3 and R1 have each passed one of four tests.
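
To make the core logic of this test concrete, here is a minimal Python sketch of the "sort, then keep duplicates apart" requirement. It is not the WordPress plugin used in the test, and it is not code produced by either model. The function name `arrange_names` is hypothetical, and the greedy strategy (always emit the most frequent remaining name, breaking ties alphabetically) is just one reasonable reading of the requirement.

```python
import heapq
from collections import Counter


def arrange_names(raw_text: str) -> list[str]:
    """Order names so identical names are not adjacent where possible.

    Illustrative assumption: input is one name per line; blank lines
    are ignored. With no duplicates the result is plain alphabetical.
    """
    names = [line.strip() for line in raw_text.splitlines() if line.strip()]
    counts = Counter(names)

    # Heap entries are (-remaining_count, name): the most frequent name
    # comes out first, and ties are broken alphabetically.
    heap = [(-count, name) for name, count in counts.items()]
    heapq.heapify(heap)

    result: list[str] = []
    held = None  # the name just emitted sits out for one turn
    while heap:
        neg_count, name = heapq.heappop(heap)
        result.append(name)
        if held is not None:
            heapq.heappush(heap, held)
        remaining = neg_count + 1
        held = (remaining, name) if remaining < 0 else None

    # If one name dominates the list, some copies cannot be separated;
    # append whatever is left rather than dropping it.
    if held is not None:
        result.extend([held[1]] * -held[0])
    return result


print(arrange_names("Bob\nAlice\nBob"))
```

With the input above, the two "Bob" entries end up on either side of "Alice". A real plugin would wrap logic like this in a WordPress admin page with a text area for input, which is the part of the test that exercises the AI's framework knowledge.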

Test 2: Rewriting a string function

A user complained that he was unable to enter dollars and cents into a donation entry field. As written, my code only allowed dollars. So, the test involves giving the AI the routine that I wrote and asking it to rewrite it to allow both dollars and cents.

Usually, this results in the AI generating some regular expression validation code. DeepSeek did generate code that works, although there is room for improvement. The code that DeepSeek V3 wrote was unnecessarily long and repetitive, while the reasoning R1 produced before generating its code was also very long.

My biggest concern is that although both models’ validation code enforces a maximum of two decimal places, the use of parseFloat involves no explicit rounding if a very long number is entered (like 0.30000000000000004). The R1 model also used JavaScript’s Number conversion without checking for edge-case inputs. If bad data comes back from an earlier part of the regular expression or a non-string makes it into that conversion, the code would crash.

It’s odd, because R1 did present a very nice list of tests to validate against:

[Screenshot: R1’s suggested list of test cases]

So here, we have a split decision. I’m giving the point to DeepSeek V3 because neither of the issues in its code would cause the program to break when run by a user, and it would produce the expected results. On the other hand, I have to give a fail to R1 because if something that’s not a string somehow gets into the Number function, a crash will ensue.

And that gives DeepSeek V3 two wins out of four, but DeepSeek R1 only one win out of four so far.
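
The two concerns raised in this test, explicit handling of decimal places and guarding against non-string input, can be sketched in a few lines. The following is a minimal Python illustration, not the JavaScript routine from the test and not either model's output. The function name `parse_donation`, the 12-digit cap on the dollar part, and the choice to return `None` for invalid input are all assumptions made for the example.

```python
import re
from decimal import Decimal

# Dollars with optional cents ("12", "12.5", "12.50") or cents only (".50").
# The 12-digit cap on the dollar part is an arbitrary illustrative limit.
_AMOUNT_RE = re.compile(r"[0-9]{1,12}(\.[0-9]{1,2})?|\.[0-9]{1,2}")


def parse_donation(value):
    """Return the amount as a Decimal with two places, or None if invalid."""
    # Guard first: anything that is not a string is rejected here rather
    # than being passed on to the numeric conversion.
    if not isinstance(value, str):
        return None
    text = value.strip()
    # fullmatch rejects inputs with more than two decimal places, such as
    # "0.30000000000000004", instead of silently truncating or rounding.
    if not _AMOUNT_RE.fullmatch(text):
        return None
    # Decimal avoids binary floating-point rounding; quantize makes the
    # two-decimal-place result explicit.
    return Decimal(text).quantize(Decimal("0.01"))


for sample in ["12.50", "7", ".5", "0.30000000000000004", "abc", 12.5]:
    print(repr(sample), "->", parse_donation(sample))
```

The design choice here is to validate before converting, so the conversion step only ever sees input that is known to be a well-formed string.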

Test 3: Finding an annoying bug

This is a test I created when I had a very annoying bug that I had difficulty tracking down. Once again, I decided to see if ChatGPT could handle it, which it did.

The challenge is that the answer isn’t obvious. Actually, the challenge is that there is an obvious answer, based on the error message. But the obvious answer is the wrong answer. This not only caught me, but it regularly catches some of the AIs.

Solving this bug requires understanding how specific API calls within WordPress work, being able to see beyond the error message to the code itself, and then knowing where to find the bug.

Both DeepSeek V3 and R1 passed this one with nearly identical answers, bringing us to three out of four wins for V3 and two out of four wins for R1. That already puts DeepSeek ahead of Gemini, Copilot, Claude, and Meta.

Will DeepSeek score a home run for V3? Let’s find out.

Test 4: Writing a script

And another one bites the dust. This is a challenging test because it requires the AI to understand the interplay between three environments: AppleScript, the Chrome object model, and a Mac scripting tool called Keyboard Maestro.

I would have called this an unfair test because Keyboard Maestro is not a mainstream programming tool. But ChatGPT handled the test easily, understanding exactly which part of the problem is handled by each tool.

Unfortunately, neither DeepSeek V3 nor R1 had this level of knowledge. Neither model knew that it needed to split the task between instructions to Keyboard Maestro and Chrome. Both also showed fairly weak knowledge of AppleScript, writing custom routines for operations that are native to the language.

Weirdly, the R1 model also failed because it made a bunch of incorrect assumptions. It assumed that a front window always exists, which is definitely not the case. It also assumed that the frontmost running program would always be Chrome, rather than explicitly checking to see if Chrome was running.

This leaves DeepSeek V3 with three passes and one fail, and DeepSeek R1 with two passes and two fails.

Final thoughts

I found that DeepSeek’s insistence on using a public cloud email address like gmail.com (rather than my normal email address with my corporate domain) was annoying. It also had a number of responsiveness failures that made these tests take longer than I would have liked.

I wasn’t sure I’d be able to write this article because, for most of the day, I got this error when trying to sign up:

DeepSeek’s online services have recently faced large-scale malicious attacks. To ensure continued service, registration is temporarily limited to +86 phone numbers. Existing users can log in as usual. Thanks for your understanding and support.

Then, I got in and was able to run the tests.

DeepSeek seems to be overly verbose in the code it generates. The AppleScript code in Test 4 was both wrong and excessively long. The regular expression code in Test 2 was correct in V3, but it could have been written in a way that made it much more maintainable. It failed in R1.

I’m definitely impressed that DeepSeek V3 beat out Gemini, Copilot, and Meta. But it appears to be at the old GPT-3.5 level, which means there’s definitely room for improvement. I was disappointed with the results for the R1 model. Given the choice, I’d still choose ChatGPT as my programming assistant.

That said, for a new tool running on much less infrastructure than the other tools, this could be an AI to watch.

What do you think? Have you tried DeepSeek? Are you using any AIs for programming support? Let us know in the comments below.

You can follow my day-to-day project updates on social media. Be sure to subscribe to my weekly update newsletter, and follow me on Twitter/X at @DavidGewirtz, on Facebook at Facebook.com/DavidGewirtz, on Instagram at Instagram.com/DavidGewirtz, on Bluesky at @DavidGewirtz.com, and on YouTube at YouTube.com/DavidGewirtzTV.